Target Alignment for a Robotic Arm via Reinforcement Learning

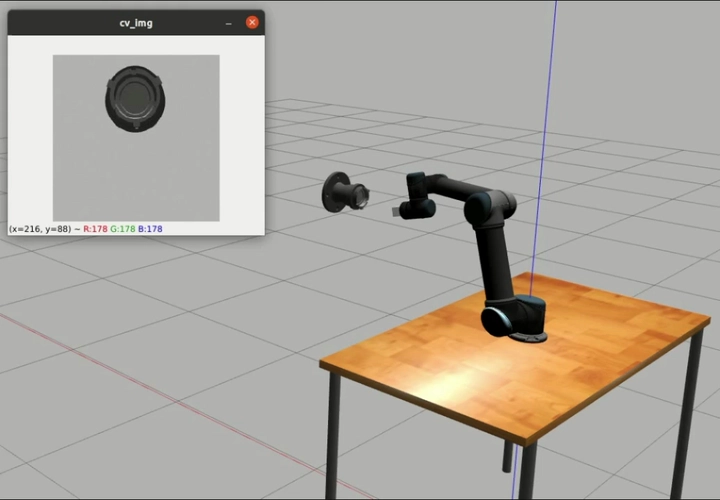

Simulation environment for robotic target alignment

Overview

This project studies robotic target alignment with reinforcement learning. The goal is to control a UR5 manipulator to align its end-effector with a target object of unknown pose. The work was completed as a team project with Jianlin Ye, Guanyu Yao, and Fangzhou Ye, advised by Prof. Sheng Han and Prof. Kai Lv.
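The overview does not say how alignment quality is measured. One common way to score it combines the end-effector's position error with its orientation error relative to the target. The sketch below shows that idea under stated assumptions: unit quaternions in (w, x, y, z) order, and hypothetical weights `w_pos` and `w_rot`. It illustrates the general technique and is not this project's reward function.

```python
import math
from typing import Sequence


def position_error(p_ee: Sequence[float], p_target: Sequence[float]) -> float:
    """Euclidean distance between end-effector and target positions."""
    return math.dist(p_ee, p_target)


def orientation_error(q_ee: Sequence[float], q_target: Sequence[float]) -> float:
    """Rotation angle (radians) between two unit quaternions, (w, x, y, z) order.

    abs() handles the double cover: q and -q describe the same rotation.
    """
    dot = abs(sum(a * b for a, b in zip(q_ee, q_target)))
    dot = min(1.0, dot)  # guard against rounding slightly above 1
    return 2.0 * math.acos(dot)


def alignment_reward(p_ee, q_ee, p_target, q_target,
                     w_pos: float = 1.0, w_rot: float = 0.1) -> float:
    """Hypothetical dense reward: negative weighted sum of pose errors."""
    return -(w_pos * position_error(p_ee, p_target)
             + w_rot * orientation_error(q_ee, q_target))


if __name__ == "__main__":
    identity = (1.0, 0.0, 0.0, 0.0)
    # 90-degree rotation about z
    rot_z90 = (math.cos(math.pi / 4), 0.0, 0.0, math.sin(math.pi / 4))
    print(orientation_error(identity, rot_z90))  # approximately pi/2
    print(alignment_reward((0, 0, 0), identity, (3, 4, 0), identity))
```

A reward like this is zero only at perfect alignment and becomes more negative as the pose drifts. The weights trade off translational against rotational precision.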

Approach

We built a task-specific simulation environment for the alignment problem, with multimodal observations including RGB, depth, and robot state. To make learning more effective, the method combined structured visual features, imitation learning, curriculum learning, and a PPO-based control policy.
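The write-up names PPO as the control-policy learner but gives no implementation details. The sketch below shows the standard PPO building blocks that such a policy is typically trained with: Generalized Advantage Estimation (GAE) and the clipped surrogate objective. The values `gamma=0.99`, `lam=0.95` and `clip_eps=0.2` are common defaults assumed here, not hyperparameters reported by this project. The imitation-learning and curriculum components are not shown.

```python
import math
from typing import List, Sequence


def gae(rewards: Sequence[float], values: Sequence[float], last_value: float,
        dones: Sequence[bool], gamma: float = 0.99, lam: float = 0.95) -> List[float]:
    """Generalized Advantage Estimation over one rollout.

    dones[t] is True if the episode ended after step t, which stops
    bootstrapping from the next state's value.
    """
    advantages = [0.0] * len(rewards)
    next_value = last_value
    running = 0.0
    for t in reversed(range(len(rewards))):
        mask = 0.0 if dones[t] else 1.0
        # one-step TD error
        delta = rewards[t] + gamma * next_value * mask - values[t]
        # exponentially weighted sum of future TD errors
        running = delta + gamma * lam * mask * running
        advantages[t] = running
        next_value = values[t]
    return advantages


def ppo_clip_loss(new_logp: Sequence[float], old_logp: Sequence[float],
                  advantages: Sequence[float], clip_eps: float = 0.2) -> float:
    """Negative clipped surrogate objective (a loss to minimize)."""
    total = 0.0
    for lp_new, lp_old, adv in zip(new_logp, old_logp, advantages):
        ratio = math.exp(lp_new - lp_old)  # pi_new(a|s) / pi_old(a|s)
        clipped = max(1.0 - clip_eps, min(ratio, 1.0 + clip_eps))
        # pessimistic bound: take the smaller of the two surrogate terms
        total += min(ratio * adv, clipped * adv)
    return -total / len(advantages)


if __name__ == "__main__":
    adv = gae([1.0, 1.0], [0.0, 0.0], 0.0, [False, True], gamma=0.5, lam=1.0)
    print(adv)  # [1.5, 1.0]
    print(ppo_clip_loss([math.log(2.0)], [0.0], [1.0]))  # about -1.2 (ratio clipped)
```

Clipping the probability ratio keeps each policy update close to the policy that collected the data. This stability matters when rollouts are expensive, as they are for multimodal visual observations.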

UR5 manipulator model used in the environment.

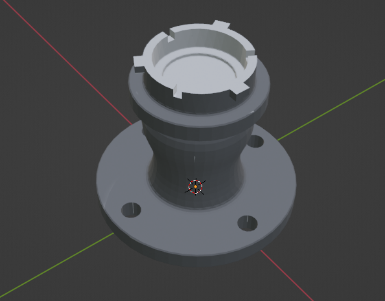

Target object model for alignment.

Simulation demo of the alignment environment and control process.

Results

The project produced a working simulation environment, a visual representation for alignment, and a PPO-based policy for the task. We also validated the setup with physical-system experiments.

Close-up simulation clip.

Physical-system demo.