VR Surfing Hand-Tracking Control

Overview

This project explores VR surfing interaction using a VR headset and a motorized mechanical surfboard platform designed to simulate the body motion of real surfing. The main goal was to create a more immersive and physically engaging surfing experience by combining feedback from the virtual environment with real-time body tracking and motion control.

The project was completed in collaboration with Premankur Banerjee and advised by Heather Culbertson and Jason Kutch at USC.

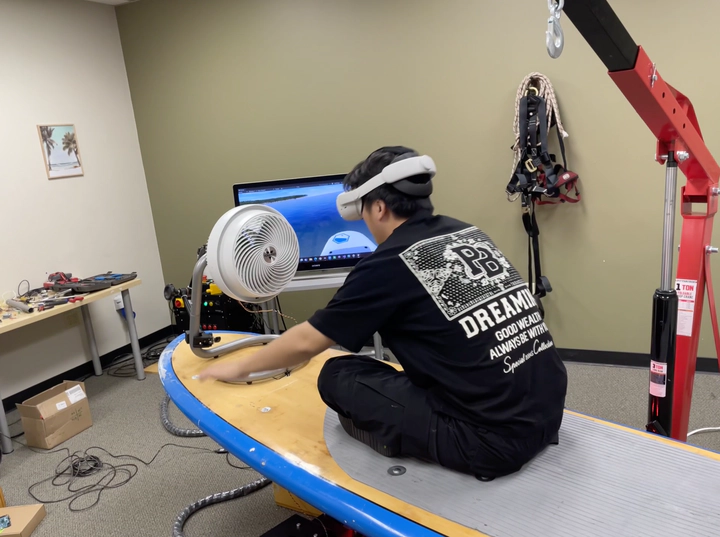

Experimental environment for the VR surfing setup.

System Design and Results

The overall system integrated visual perception, motion interpretation, and physical actuation into one interactive loop. The VR headset provided the immersive surfing scene and real-time environmental feedback, while the hand-tracking module extracted user motion from joint landmarks. The control logic mapped hand movement and changes in the virtual water level to board commands, and the motorized mechanical surfboard executed those commands to reproduce wave-like motion.
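The perceive, interpret, actuate loop described above can be sketched as a single function. This is a minimal illustration under stated assumptions, not the project's implementation: `run_control_loop`, `BoardCommand`, and the four callables are hypothetical names, and the board command is assumed to be a pair of tilt angles in degrees.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

# A landmark is a normalized (x, y, z) triple, the shape MediaPipe reports.
Landmark = Tuple[float, float, float]


@dataclass
class BoardCommand:
    """Hypothetical board command: target tilt angles in degrees."""
    pitch_deg: float
    roll_deg: float


def run_control_loop(
    read_hand: Callable[[], Optional[List[Landmark]]],
    read_water_level: Callable[[], float],
    map_to_command: Callable[[List[Landmark], float], BoardCommand],
    send_command: Callable[[BoardCommand], None],
    steps: int,
) -> int:
    """Run `steps` perceive -> interpret -> actuate iterations.

    Frames with no detected hand send nothing (assumption: the board then
    holds its last command). Returns the number of commands sent.
    """
    sent = 0
    for _ in range(steps):
        landmarks = read_hand()                      # perception: hand-tracking module
        if landmarks is None:
            continue                                 # no hand detected in this frame
        level = read_water_level()                   # feedback from the VR scene
        command = map_to_command(landmarks, level)   # control logic
        send_command(command)                        # actuation: motorized surfboard
        sent += 1
    return sent


if __name__ == "__main__":
    # Fake inputs standing in for the tracker, the VR scene, and the board driver.
    frames = iter([[(0.5, 0.5, 0.0)] * 21, None, [(0.5, 0.4, 0.0)] * 21])
    sent_log: List[BoardCommand] = []
    count = run_control_loop(
        read_hand=lambda: next(frames),
        read_water_level=lambda: 0.2,
        map_to_command=lambda lm, level: BoardCommand(pitch_deg=10.0 * level, roll_deg=0.0),
        send_command=sent_log.append,
        steps=3,
    )
    print(count, sent_log)
```

Passing the sensing, mapping, and actuation steps in as functions keeps the loop testable with fake inputs before any hardware is connected.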

To enable natural interaction, we built a MediaPipe-based full-body joint tracking system with a particular focus on hand landmark detection. The user’s hand motion was interpreted together with changes in the virtual environment’s water level, and the combination of the two was used to control the movement of the surfboard. By coupling perception and actuation in real time, the project created a closed-loop VR control system that translated body motion into physical surfing feedback.

Full-body joint detection and tracking demonstration.
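One way the hand-and-water-level mapping could work is sketched below. It assumes MediaPipe Hands' 21-landmark layout with normalized image coordinates (y grows downward) and uses the standard indices for the wrist and the index and pinky knuckles. The function `hand_to_board`, the gains, the neutral wrist height, the water-level scale, and the tilt limit are hypothetical values chosen for illustration, not the project's actual tuning.

```python
import math
from typing import List, Tuple

Landmark = Tuple[float, float, float]  # normalized (x, y, z); y grows downward

# MediaPipe Hands landmark indices (21 landmarks per hand).
WRIST = 0
INDEX_FINGER_MCP = 5
PINKY_MCP = 17

# Hypothetical tuning constants, not values from the project.
NEUTRAL_WRIST_Y = 0.5   # wrist height treated as "level"
PITCH_GAIN_DEG = 30.0   # pitch per unit of wrist displacement
WATER_GAIN_DEG = 15.0   # pitch per unit of water-level signal (assumed in [-1, 1])
MAX_TILT_DEG = 12.0     # safety clamp for the motorized platform


def clamp(value: float, limit: float) -> float:
    """Limit value to the range [-limit, limit]."""
    return max(-limit, min(limit, value))


def hand_to_board(landmarks: List[Landmark], water_level: float) -> Tuple[float, float]:
    """Map one hand's landmarks plus a water-level signal to (pitch, roll) in degrees."""
    if len(landmarks) != 21:
        raise ValueError("expected 21 hand landmarks")

    # Pitch: raising the wrist (smaller y) gives positive pitch; the water
    # level adds a wave-driven offset on top of the hand input.
    wrist_y = landmarks[WRIST][1]
    pitch = (NEUTRAL_WRIST_Y - wrist_y) * PITCH_GAIN_DEG + water_level * WATER_GAIN_DEG

    # Roll: tilt of the knuckle line from the index MCP to the pinky MCP.
    # abs(dx) keeps the angle in [-90, 90] whichever knuckle is further left.
    ix, iy, _ = landmarks[INDEX_FINGER_MCP]
    px, py, _ = landmarks[PINKY_MCP]
    roll = math.degrees(math.atan2(py - iy, abs(px - ix)))

    return clamp(pitch, MAX_TILT_DEG), clamp(roll, MAX_TILT_DEG)
```

The clamp is a conservative assumption for a platform carrying a rider; real limits would come from the hardware.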

The project produced a working prototype of hand-tracking-based VR surfboard control. It demonstrated that body-joint tracking can drive a motorized platform in coordination with a virtual environment, helping to reproduce part of the dynamic feel of real surfing.

The main outcomes included:

- a MediaPipe-based full-body and hand-joint tracking system for VR interaction,

- a control method that combines hand motion and virtual water-level changes,

- a real-time interface between the virtual surfing environment and a motorized mechanical surfboard,

- and a prototype immersive experience designed to simulate the kinesthetic feel of surfing.

More broadly, the project showed how computer vision, VR interaction, and human-centered control design can be combined to create embodied motion experiences beyond standard controller-based interfaces.

VR surfing board system in operation.